| Conductor Details |

It may be the case that the network characteristics are acceptable to both the client and server applications and to the user. However, when network conditions are poor, applications typically do not degrade gracefully. Rather than providing degraded functionality, when network capabilities fall below the expected level, most applications provide no functionality at all. This lack of graceful degradation is of particular concern to mobile computing, for which network characteristics can vary over many orders of magnitude.

There are two common approaches to handling limited resources: reservations and adaptation. In a reservation-based system, the network will dedicate, to an application, the resources necessary to provide a particular level of service. The application must indicate the desired service characteristics. The network will then attempt to reserve the resources required to provide this level of service. The application will wait for the reservation to be successful, before providing any service. This scheme provides either perfect service or no service.

An adaptation-based system, on the other hand, assumes that the user is not willing to wait for the required resources to become available and is instead willing to accept degraded service. With adaptation, we seek to change the manner in which the application uses the network to balance the application requirements against the capabilities of the network. This can be done either by reducing the degree to which an application uses the network or by supplementing the expected network services with additional services provided in software.

Conductor provides application-transparent adaptation. Transparency is possible because applications use pre-arranged and identifiable application-layer protocols to communicate. By intercepting a data stream between the client and server, the protocol (the pattern of communication) and the data itself can be transformed to make them more suitable for transmission. The original protocol (but not necessarily the original data) can be reconstituted for delivery on either end. Many broad categories of adaptations can be provided transparently:

Adaptations can be custom built for specific protocols, user behaviors, network conditions, and user requirements. By deploying adaptations, the user is able to dynamically balance the level of service he receives from his applications against the cost of network communication required to provide that service. Typically this is done by ensuring that only the most important information is transmitted in the most efficient or appropriate manner for a given network.

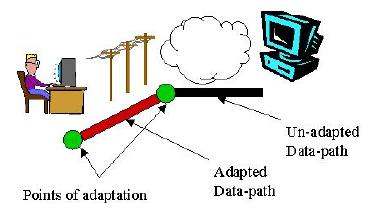

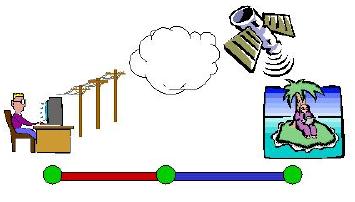

By allowing dynamic proxy placement, a greater variety of network configurations can be supported. The simplest example is a mobile computer that moves between network access points. The point at which a data stream enters the network may change. Therefore, the most efficient proxy location may also change. As another example, consider two mobile peers. If two mobile machines wish to communicate, any adaptation must occur on the two machines themselves. Forcing adaptation to occur on a third, proxy machine can only hinder the communication. In this case, end-to-end adaptation is more appropriate. End-to-end adaptation is also better if more than one network segment has limiting characteristics. Consider a client and server, each connected to a network by modem (see figure below). If each used a proxy to allow compression across its modem link, the compression and decompression algorithms would be executed twice on the same data. Instead, it is more efficient to compress at one end of the connection and decompress at the far end.

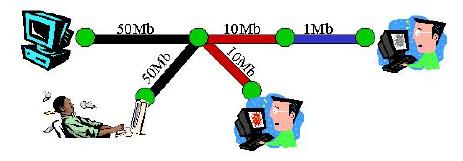

In contrast, there are cases in which it is more appropriate for some adaptation to occur at a mid-point. For example, if all adaptations were end-to-end, poor load balancing would be achieved. Also, some adaptations cannot be composed end-to-end. Consider a server connected over a low bandwidth link and a client connected over a high latency link (see figure below). The client may choose to perform pre-fetching to reduce the apparent latency. At the same time, filtering or compression might be needed for the low bandwidth link. If performed end-to-end, these two activities are counter-productive. Ideally, a proxy in the middle would provide a cache from which the client can pre-fetch, and compression/decompression for the server.

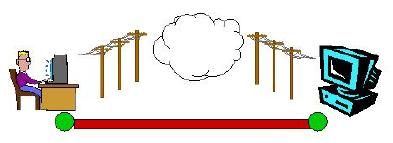

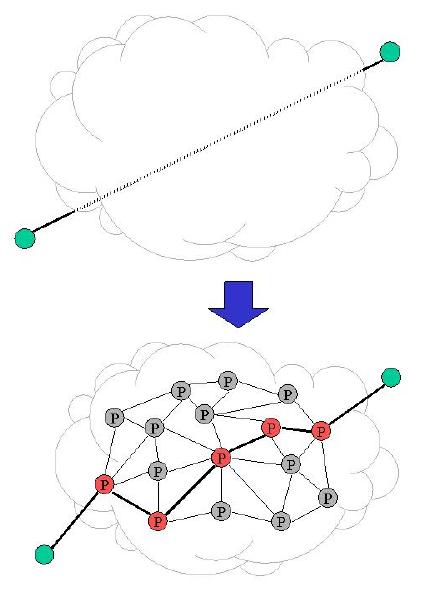

Beyond dynamic proxy placement, it can also be useful to have more than one proxy. Clearly, in a modular system, it is desirable to be able to combine several simple adaptations together. Adaptations can be combined on a single proxy, but adaptor combination is often more effective on multiple proxies. One advantage is proximity. The closer an adaptation module is to the limiting segment of the network, the more quickly it is able to react as conditions change. In the extreme, when network routing changes, adaptation executing on proxies along the old route will be automatically removed. Finally, use of multiple proxies allows better sharing of adaptation. Multiple caching adaptors can provide multiple levels of caching (LAN level, corporate level, ISP level, etc.) for entire networks of computers. One connection could then take advantage of several proxies, each with a caching adaptor. As a final example, in a multi-casting system, only through the use of multiple points of adaptation can a single source provide multiple clients, each with a different level of connectivity, the highest possible level of data fidelity (see figure below).

The Conductor prototype will use a transparent proxy mechanism to intercept TCP connections (see figure below). A client, attempting to connect to a particular port on a particular server, will instead be connected to the Conductor system. The Conductor system will (eventually) connect to the server on behalf of the client. Thus, Conductor can transparently insert itself into the data stream. The Conductor system, itself, consists of a number of proxy hosts. Each is discovered via a transparent proxy mechanism. When a connection is forwarded through a Conductor-enabled router, the router is selected as a potential proxy node. Data will actually flow over a series of TCP connections, one between each pair of adapting proxies.

Each proxy supports a copy of the Conductor adaptation framework. The framework is responsible for accepting new connections and routing them to their destination. The framework deploys and supports adaptor modules and allows them to provide cooperative adaptations. The framework also provides a set of distributed algorithms for global adaptor selection (planning), reliability, and security.

Each adaptor module conforms to an adaptor API. Each adaptor module is self-descriptive, specifying its uses, capabilities, and costs. An adaptor receives a particular protocol, performs an arbitrary transformation, and produces a (potentially) different protocol. For transparency, adaptor modules are frequently deployed in pairs, converting to a more transmittable protocol with one adaptor and back to the original protocol with a second adaptor.
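The idea of self-describing adaptors deployed in pairs can be illustrated with a minimal sketch. The `Adaptor` class, its fields, the protocol labels, and the cost values below are hypothetical names chosen for illustration; they are not the actual Conductor adaptor API. Compression with `zlib` stands in for "a more transmittable protocol".

```python
import zlib
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class Adaptor:
    """Hypothetical self-describing adaptor: metadata plus a transformation."""
    name: str
    input_protocol: str    # protocol the adaptor accepts
    output_protocol: str   # protocol the adaptor produces
    cost: float            # illustrative relative cost, not a measured value
    transform: Callable[[bytes], bytes]


# A matched pair: the first converts to a more compact form,
# the second restores the original protocol.
compressor = Adaptor("compress", "http", "http+deflate", 1.0, zlib.compress)
decompressor = Adaptor("decompress", "http+deflate", "http", 0.5, zlib.decompress)


def deploy_pair(first: Adaptor, second: Adaptor, payload: bytes) -> Tuple[bytes, bytes]:
    """Run payload through a pair of adaptors and return (in_transit, delivered)."""
    if first.output_protocol != second.input_protocol:
        raise ValueError("adaptors do not compose: protocol mismatch")
    if second.output_protocol != first.input_protocol:
        raise ValueError("pair does not restore the original protocol")
    in_transit = first.transform(payload)
    delivered = second.transform(in_transit)
    return in_transit, delivered
```

In this sketch the pair is lossless, so the delivered bytes equal the original. A lossy pair (for example, image downsampling) would restore the protocol but not the data, which matches the distinction drawn above between the original protocol and the original data.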

The Conductor framework located on each proxy must also monitor the local environment to aid in selecting adaptations. It will monitor network interfaces for availability, and levels of latency, bandwidth, and security. It will also monitor the local system for load, storage resources, and security posture. This information will be shared with other proxies to coordinate the selection of adaptors. It is likely that much of this monitoring can occur externally to the Conductor framework itself. We hope to make use of an existing resource monitoring system.

If distributed planning were used, each node might select its own local adaptations based on local conditions, advertise its local plan, and revise its activities as the global situation becomes clear. With centralized planning, on the other hand, each node would forward its local state to a single node, which would formulate and distribute a global plan. Distributed planning has the advantage of being robust to network partitioning. However, distributed planning is slow to converge on a final solution. Since the addition and removal of adaptors are expensive operations, it is preferable to determine the globally optimal solution in one step.

Conductor's planning algorithm is semi-centralized, compromising between centralized planning and distributed planning. Planning is centralized within each (dynamically detected) network partition. Within each partition, a single node gathers status information for every other node in the partition, formulates a plan, and delivers it.
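As a rough illustration of planning within one partition, the sketch below has a single planner receive the status of every node on the path and formulate a plan in one step. The `NodeStatus` fields, the bandwidth threshold, and the placement rule (compress before the first slow link, decompress after the last one) are assumptions made for this example; the text does not specify Conductor's actual plan formulation.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class NodeStatus:
    """Hypothetical status report a node sends to the planner."""
    node: str
    downstream_kbps: float  # bandwidth of the link toward the next node on the path


def formulate_plan(path: List[NodeStatus], low_kbps: float = 100.0) -> Dict[str, List[str]]:
    """Return a mapping from node name to the adaptors it should deploy.

    Illustrative rule: if any link on the path is slow, compress once before
    the first slow link and decompress once after the last slow link, rather
    than compressing separately across each slow link.
    """
    # The last node has no downstream link, so it is excluded from the scan.
    slow = [i for i, status in enumerate(path[:-1]) if status.downstream_kbps < low_kbps]
    if not slow:
        return {}
    return {
        path[slow[0]].node: ["compress"],
        path[slow[-1] + 1].node: ["decompress"],
    }
```

For a client and server that are each behind a modem, this rule yields a single compress/decompress pair at the two ends of the path instead of one pair per modem link.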

Planning adds overhead in both bandwidth and latency to each connection. Information gathering and plan distribution add a single round trip to connection setup. Since planning can also be triggered during the life of a connection by changes in routing or link characteristics (beyond what the deployed adaptors can handle), additional overhead is also possible.

Since each proxy sends a moderate amount of state information to the planning node, and because bandwidth tends to be precious when adaptation is required, it is worthwhile to reduce the amount of data transmitted. The state of each proxy is versioned, allowing it to be cached. Transmission occurs optimistically; transmission is avoided if it is likely that the recipient has the data cached. Under the assumption that proxy conditions change infrequently and connections follow regular (and hence overlapping) paths, caching should greatly reduce the amount of data transmitted. For example, many connections between the same pair of hosts over a short period of time (as is common when fetching a web page, for example), should incur very little bandwidth overhead for planning.
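One way to picture versioned state with optimistic transmission is sketched below. The `Planner` and `Proxy` classes, their method names, and the miss-then-resend fallback are assumptions for illustration only; the text does not describe the actual wire protocol.

```python
from typing import Dict, Optional, Tuple


class Planner:
    """Hypothetical planning node that caches the versioned state of each proxy."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.cache: Dict[str, Tuple[int, dict]] = {}

    def receive(self, node: str, version: int, state: Optional[dict] = None) -> bool:
        """Accept a report. Return False on a cache miss (full state is needed)."""
        if state is not None:
            self.cache[node] = (version, state)
            return True
        cached = self.cache.get(node)
        return cached is not None and cached[0] == version


class Proxy:
    """Hypothetical proxy that sends only a version number when it believes
    the planner already holds its current state."""

    def __init__(self, name: str, state: dict) -> None:
        self.name = name
        self.state = state
        self.version = 1
        self.believed_cached: Dict[str, int] = {}  # planner name -> version sent

    def update(self, state: dict) -> None:
        """Record a change in local conditions under a new version number."""
        self.state = state
        self.version += 1

    def report(self, planner: Planner) -> int:
        """Report to the planner; return how many full-state messages were sent."""
        if self.believed_cached.get(planner.name) == self.version:
            # Optimistic path: send the version only.
            if planner.receive(self.name, self.version):
                return 0
        # First contact, changed state, or the optimistic guess was wrong.
        planner.receive(self.name, self.version, self.state)
        self.believed_cached[planner.name] = self.version
        return 1
```

In this sketch, repeated connections through an unchanged proxy cost only a version number each, and a wrong guess about the planner's cache costs one extra exchange rather than a planning failure.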

Typically, reliable communication implies exactly-once delivery of transmitted data. Since adaptors can arbitrarily change the data in transit, the data delivered is arbitrarily different from the data transmitted (as compared between any two points along the connection path). It is difficult to map exactly-once delivery onto this model. When a failure occurs, it is not possible to compare the data received downstream with data sent upstream to determine what must be retransmitted. Only the adaptors themselves know what transformations have been applied. Without further support, the loss of an adaptor (perhaps due to proxy failure) can result in data loss.

To combat this problem, Conductor provides the notion of semantic reliability. As data moves along the stream, it may be changed by adaptors, but it retains the same semantic meaning. If this property is enforced end-to-end, exactly-once delivery of semantic meaning can be provided without requiring state information from each adaptor to allow recovery.

Semantic reliability is implemented using adaptation-specified segmentation. Any modification to the data stream must be contained within a segment. To perform inter-segment modifications, two or more segments must first be combined into one. Segmentation preserves the adaptation-specific points of retransmission, allowing adaptor removal at appropriate points in the stream and recovery from adaptor failure.
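The segmentation rule can be sketched as follows. The `Segment` layout (a range of original segment numbers plus the current bytes) and the functions `merge`, `compress_across`, and `resume_point` are hypothetical and chosen only to illustrate the idea that a modification spanning segments requires combining them first, and that recovery restarts at a segment boundary.

```python
import zlib
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Segment:
    """Hypothetical segment: covers original segment numbers first..last."""
    first: int
    last: int
    data: bytes  # current (possibly adapted) contents


def merge(a: Segment, b: Segment) -> Segment:
    """Combine two adjacent segments so one modification may span both."""
    if b.first != a.last + 1:
        raise ValueError("only adjacent segments can be combined")
    return Segment(a.first, b.last, a.data + b.data)


def compress_across(a: Segment, b: Segment) -> Segment:
    """Example inter-segment adaptation: merge first, then modify within the result."""
    combined = merge(a, b)
    return replace(combined, data=zlib.compress(combined.data))


def resume_point(delivered: List[Segment]) -> int:
    """Return the original segment number from which retransmission must start,
    given the in-order segments that arrived downstream before a failure."""
    next_needed = 0
    for segment in delivered:
        if segment.first != next_needed:
            break  # gap: everything from next_needed onward must be resent
        next_needed = segment.last + 1
    return next_needed
```

Because the combined segment still records which original segments it covers, a downstream node can name a retransmission point without knowing what transformation the failed adaptor applied.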

The client and server could use end-to-end encryption to secure the data stream. However, end-to-end encryption would severely limit the types of adaptations that are possible and would do nothing to solve the problem of secure coordination of adaptations. Instead, link-level encryption is used to grant access to trusted proxy nodes while denying access to all others. However, mechanisms are required for proxy authentication, selection, and key distribution.

To allow wide-spread deployability, Conductor must provide trust between hosts from different administrative domains. At the same time, since adaptation is generally employed when connectivity is poor and costly, authentication and key distribution should have low communication overhead. In particular, authentication of a node must not require an interactive search (perhaps querying a hierarchy of authentication servers) of authentication authorities.

So that overhead latency is minimized, Conductor will provide a security negotiation in parallel with adaptation planning activities. The planning node is normally an end node, and can therefore be trusted (as it will have access to the data stream regardless). Each security negotiation activity can occur in parallel with each planning activity:

| | Planning | Security |
|---|---|---|
| Step 1 | Information Gathering | Authentication |
| Step 2 | Plan Formulation | Node Selection |
| Step 3 | Plan Distribution | Key Distribution |

Conductor authentication is certificate based. During authentication, each node will send a verifiable certificate containing its identity and public key to the planner. So that no additional round trips are required, the planner must receive all of the certificates necessary to verify the public key of each node. Certificates forming either a certificate authority hierarchy or a chain of trust (both mechanisms are supported) must therefore also be provided. These certificates can thus be verified locally at the planner during node selection. The verified public keys can then be used to ensure secure key distribution. A similar mechanism is also used to provide authentication of distributed keys. Session keys can then be used to protect both the data stream and adaptation control mechanisms.